After Mythos: Identity Has to Anchor in Hardware

Mike Malone

TL;DR. AI-assisted vulnerability discovery is operationally real, and the disclosure window most security programs depend on is compressing fast. The assumption that breaks first is credential portability: any secret a host can hold, an attacker can lift and replay elsewhere. Hardware-bound identity, short-lived certificates, and continuous verification are how trust holds up when software trust does not. This is a solvable problem. The pieces exist. The infrastructure to connect them is what's missing.

Most of the discussion since Anthropic's Mythos announcement has been about Anthropic. The thing worth holding onto is smaller, quieter, and has very little to do with which lab's name is on the press release.

AI-assisted vulnerability discovery is operationally real. In roughly a month of pre-release testing, Mythos found a 27-year-old bug in OpenBSD — an operating system that has been studied by serious people for decades. It found a 16-year-old bug in FFmpeg in a line of code that automated testing tools had hit five million times without catching the problem. It chained primitives in the Linux kernel into a working privilege escalation. Anthropic restricted access through Project Glasswing, a coalition of industry partners including Microsoft, Google, Apple, CrowdStrike, and others. That is a policy choice, and reasonable people will argue about whether the restrictions hold.

The capability itself does not depend on those restrictions. Other labs are building similar models. Independent evaluations have shown that smaller open-weight models can already replicate parts of the same analysis at a small fraction of the cost. This category of capability is operational, accessible, and improving fast.

The CVE feed was always a lagging indicator

The vulnerability management workflow most security programs depend on assumes a window. A bug is discovered. It is responsibly reported. A CVE is issued. A patch ships. Defenders apply the patch before attackers weaponize it.

Every scanner, every compliance framework, every cyber insurance policy is built on that sequence holding. When the window is days or weeks, it works often enough. It is what the industry built itself around.

The window is compressing. It has been compressing for a while, and AI-assisted discovery is compressing it further. The evidence is strongest on the discovery side — fully autonomous, end-to-end exploit generation at industrial scale is not yet the norm, even if the trend line is moving toward it.

The CVE feed is not going away. But for a growing class of high-value vulnerabilities, it is drifting from early warning toward retrospective record. Plenty of CVEs still arrive ahead of exploitation. The ones that do not are the ones that decide your architecture.

Some teams have already adjusted to this. Most have not.

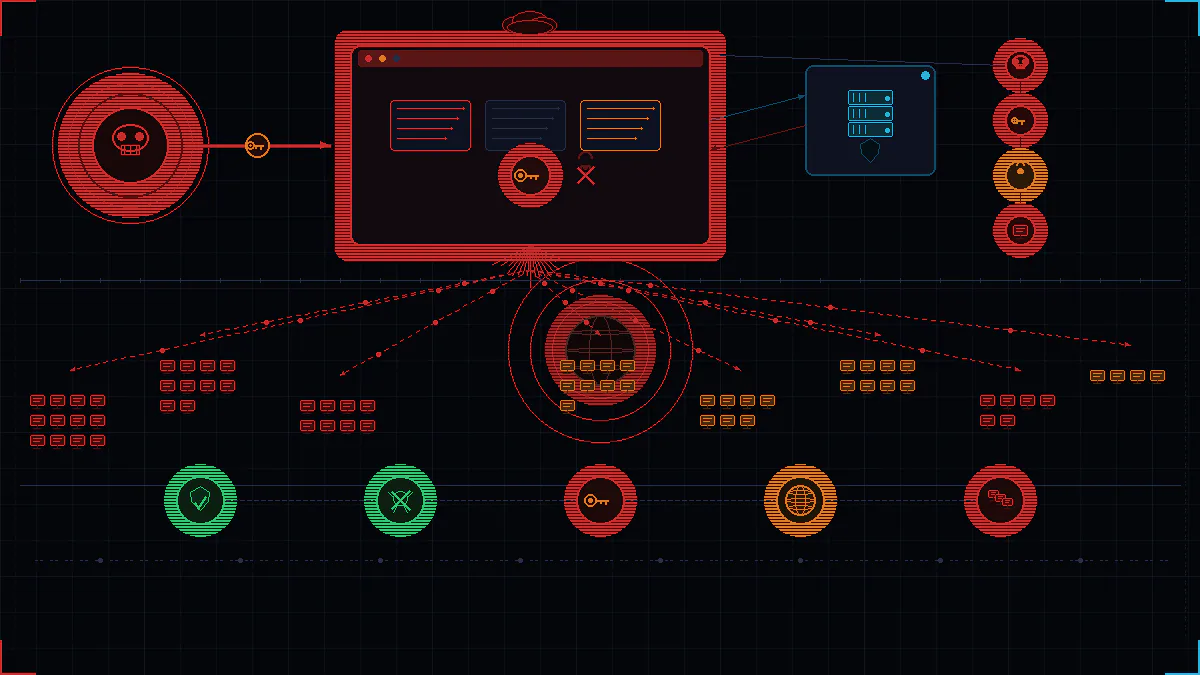

The assumption that breaks first

Patching faster matters. It does not, on its own, address the harder question: which assumptions stop earning their keep?

The first assumption to fail is that a credential issued to a piece of software remains trustworthy as long as the credential itself has not been revoked. That assumption was always doing more work than it should. It rests on the premise that the host where the credential lives is a stable, uncompromised platform. If the host can be silently compromised before disclosure, before a patch exists, before anyone has reason to suspect it, then a credential whose security depends on the host being clean is operating on borrowed time.

This is not a new problem. Infostealer malware has been industrialized for years, with a credential marketplace behind it that operates at scale. AI-accelerated discovery raises the base rate of compromise across endpoints whose patching cadence cannot keep up. It does not introduce a new class of attack so much as widen one that already exists.

The problem is portability. Passwords, bearer tokens, API keys, exported certificates, VPN secrets, session cookies. Any secret that can be read off a machine and reused on a different one. The constraints stacked on top of these — token binding, DPoP, audience restrictions, device posture checks — all push back on replay, but they are working around a property that hardware-bound keys do not have in the first place.

An infostealer pulling browser session cookies off a compromised laptop is fundamentally a portability attack. The value comes from replaying the credential from infrastructure the attacker controls. An exfiltrated AWS access key is the same shape of problem. Whoever holds the key bytes is, for practical purposes, the principal. An OAuth refresh token captured from a developer's machine and used from somewhere else is the same shape on a different protocol.

The failure mode that shows up most often in production environments: an access architecture that treats the credential as the proof of identity, and the host where the credential lives as incidental. That architecture is leaning on something that is getting weaker, in an environment that is getting harder.

What anchors when software does not

If software trust is becoming transient, the load has to move somewhere that is not transient.

That somewhere is hardware. A private key generated inside a TPM or a Secure Enclave does not leave the chip. It cannot be exfiltrated and replayed from elsewhere. That single property changes the attacker's economics in a way nothing else in the stack does.

It does not make the device invulnerable. An attacker with runtime control can still invoke the key through legitimate APIs while they maintain execution on the host. What they cannot do is take it home, sell it, or use it from infrastructure they control. The attack loses its lateral leverage. It loses the property that makes credential theft profitable at scale: portability.
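The difference between a portable secret and a key that can only be invoked can be shown with a toy model. The sketch below is an illustration under loud assumptions, not a real TPM or Secure Enclave API: `SimulatedHardwareKey` is a hypothetical class, HMAC stands in for the asymmetric signature a real secure element would produce, and Python cannot actually enforce non-exportability. It only shows the shape of the argument: a copied bearer token works from anywhere, while a captured response to one challenge is useless against the next.

```python
import hashlib
import hmac
import secrets


class SimulatedHardwareKey:
    """Toy stand-in for a hardware-bound key: it can sign, but offers no export method."""

    def __init__(self) -> None:
        # In real hardware this material never leaves the chip.
        self.__secret = secrets.token_bytes(32)

    def sign(self, challenge: bytes) -> bytes:
        # HMAC is an assumption made for brevity; real devices use asymmetric signatures.
        return hmac.new(self.__secret, challenge, hashlib.sha256).digest()

    def verify(self, challenge: bytes, signature: bytes) -> bool:
        return hmac.compare_digest(self.sign(challenge), signature)


def bearer_token_replay() -> bool:
    """A bearer token copied off the host authenticates from anywhere."""
    issued_token = secrets.token_bytes(16)
    stolen_copy = bytes(issued_token)  # attacker exfiltrates the bytes
    return hmac.compare_digest(stolen_copy, issued_token)


def signed_response_replay() -> bool:
    """A response captured for one challenge fails against a fresh challenge."""
    key = SimulatedHardwareKey()
    first_challenge = secrets.token_bytes(16)
    captured_response = key.sign(first_challenge)  # attacker records this
    fresh_challenge = secrets.token_bytes(16)      # verifier issues a new nonce
    return key.verify(fresh_challenge, captured_response)


def on_host_abuse() -> bool:
    """An attacker still executing on the host can invoke the key legitimately."""
    key = SimulatedHardwareKey()
    challenge = secrets.token_bytes(16)
    return key.verify(challenge, key.sign(challenge))
```

The third function is the caveat from the paragraph above: while the attacker stays on the box, the key still answers. What goes away is the ability to carry the credential somewhere else.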

ACME Device Attestation, an extension to the ACME certificate-issuance protocol, is what makes this practical at fleet scale. The device cryptographically proves its identity using hardware-bound keys before a certificate is issued. The certificate is short-lived and rotated automatically. The private key stays inside the secure element and never touches process memory. An exported key, by contrast, lives in process memory and can be read by whatever else has access, including, increasingly, whatever AI-assisted tooling an attacker has pointed at the host.

This is what "phishing-resistant" should mean. It is also what "Zero Trust device identity" should mean. Both phrases have been used loosely enough that the technical content has gotten thin — and that is a problem, because the architectural decisions underneath them are real and they matter.

Continuous trust, not periodic trust

A certificate that lives ninety days is a different kind of object than one that lives an hour. They authenticate the same thing. Only one of them remains authoritative if the device is compromised at minute thirty.

Long-lived credentials make sense in a world where compromise is a rare event you respond to incident-by-incident. They stop making sense in a world where the base rate of latent compromise is rising and you cannot reliably tell which machines are clean. The defensive answer is to make the half-life of any individual credential short enough that a compromised one does not stay useful for long.
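The arithmetic behind that claim is simple enough to write down. The sketch below assumes the worst case where nobody notices the compromise and nothing is revoked, so a credential stays usable until it expires on its own. The function name is hypothetical; the one-hour and ninety-day lifetimes and the minute-thirty compromise come from the comparison above.

```python
from datetime import timedelta


def remaining_exposure(lifetime: timedelta, compromised_after: timedelta) -> timedelta:
    """Time a certificate stays valid after compromise, assuming no revocation."""
    if compromised_after >= lifetime:
        return timedelta(0)
    return lifetime - compromised_after


compromise_point = timedelta(minutes=30)

hour_cert_exposure = remaining_exposure(timedelta(hours=1), compromise_point)
quarter_cert_exposure = remaining_exposure(timedelta(days=90), compromise_point)

# Dividing two timedeltas yields a plain float ratio.
exposure_ratio = quarter_cert_exposure / hour_cert_exposure

print(hour_cert_exposure)     # 0:30:00
print(quarter_cert_exposure)  # 89 days, 23:30:00
print(exposure_ratio)         # 4319.0
```

Under these assumptions, the ninety-day certificate gives the attacker roughly four thousand times more usable time than the hour-long one for the same compromise.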

In systems built around hour-long certificates instead of quarter-long ones, rotation becomes the primary defense and revocation becomes the exception path. PKI teams who have spent careers tuning revocation infrastructure end up tuning issuance infrastructure instead. It is a different kind of operational problem — and a more tractable one.
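When rotation is the primary defense, the core scheduling question becomes "when does this certificate need to be replaced?" rather than "has it been revoked?" A minimal sketch of that decision follows. The function names and the two-thirds renewal fraction are illustrative assumptions, not a description of any particular product's behavior; the idea is only that renewal happens well before expiry so a failed attempt leaves time to retry.

```python
from datetime import datetime, timedelta, timezone


def renewal_time(not_before: datetime, not_after: datetime, fraction: float = 2 / 3) -> datetime:
    """Return the moment at which a certificate should be renewed.

    `fraction` is the share of the validity period allowed to elapse first.
    The default of two thirds is an assumed policy value.
    """
    if not 0 < fraction < 1:
        raise ValueError("fraction must be strictly between 0 and 1")
    if not_after <= not_before:
        raise ValueError("not_after must be later than not_before")
    return not_before + (not_after - not_before) * fraction


def should_renew(now: datetime, not_before: datetime, not_after: datetime) -> bool:
    """True once the renewal point has passed, including after expiry."""
    return now >= renewal_time(not_before, not_after)


issued = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
expires = issued + timedelta(hours=1)

print(should_renew(issued + timedelta(minutes=10), issued, expires))  # False
print(should_renew(issued + timedelta(minutes=45), issued, expires))  # True
```

With an hour-long certificate and this assumed policy, the renewal point falls about forty minutes after issuance, leaving a twenty-minute margin for retries.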

The same transition is already visible in consumer authentication. Passkeys are replacing passwords by binding authentication to hardware-backed cryptographic keys instead of portable shared secrets. The enterprise device-identity stack is going through the same shift on a different timeline.

This reframes what continuous verification actually means. The question is not "is this device trusted." The question is: "is this device, right now, presenting a certificate issued moments ago, against a key that cannot leave the hardware, on a host that just attested to its state?" The second question has a real answer. The first one does not.
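That second question can be read as a policy check with three independent conditions. The sketch below is one way to express it, with every name and threshold an assumption made for illustration: `Presentation` is a hypothetical record of what a device presents, and the one-hour certificate age and fifteen-minute attestation age are placeholder limits, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Presentation:
    """Hypothetical summary of what a device presents with an access request."""
    cert_issued_at: datetime
    cert_expires_at: datetime
    key_is_hardware_bound: bool
    last_attested_at: datetime


def trusted_right_now(
    p: Presentation,
    now: datetime,
    max_cert_age: timedelta = timedelta(hours=1),            # assumed limit
    max_attestation_age: timedelta = timedelta(minutes=15),  # assumed limit
) -> bool:
    """Answer the question for this moment only, not for the device in general."""
    cert_valid = p.cert_issued_at <= now < p.cert_expires_at
    cert_fresh = now - p.cert_issued_at <= max_cert_age
    attestation_fresh = timedelta(0) <= now - p.last_attested_at <= max_attestation_age
    return cert_valid and cert_fresh and p.key_is_hardware_bound and attestation_fresh


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)

fresh = Presentation(
    cert_issued_at=now - timedelta(minutes=5),
    cert_expires_at=now + timedelta(minutes=55),
    key_is_hardware_bound=True,
    last_attested_at=now - timedelta(minutes=2),
)
stale_attestation = Presentation(
    cert_issued_at=now - timedelta(minutes=5),
    cert_expires_at=now + timedelta(minutes=55),
    key_is_hardware_bound=True,
    last_attested_at=now - timedelta(hours=3),
)

print(trusted_right_now(fresh, now))              # True
print(trusted_right_now(stale_attestation, now))  # False
```

Each condition has a definite answer at the moment of the request, which is what separates this check from a standing "trusted device" flag.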

What this does not solve

Hardware-bound identity is not magic. It does not stop endpoint compromise. A legitimate user can still be socially engineered out of a session, and a malicious insider with sanctioned access still has sanctioned access. What hardware-bound identity does is push attacks upward into session hijacking and live-on-device abuse — where the attacker has to be on the box, actively using the credential, while the box is online.

The cheap, scalable attacks — credential theft, replay, fan-out from attacker-controlled infrastructure — get much harder. The remaining attacks are harder to execute at scale, even if they are still hard to detect. Identity is also not the whole control plane. Segmentation still matters. So do memory safety, exploit mitigation, behavioral detection, and least privilege. The argument is not that identity replaces them. The argument is that, under AI-accelerated exploitation, cryptographically verifiable identity is the layer most security architectures have been most casual about — and the one whose assumptions are degrading fastest.

What this looks like in practice

The pieces are already deployable. Most enterprise fleets have TPMs. Most Macs have Secure Enclaves. Most enterprise identity stacks can integrate with certificate-based authentication and connect it to Okta, Jamf, and the privileged access systems that gate the things attackers actually want.

What is usually missing is the issuance and rotation infrastructure that makes ACME Device Attestation work at fleet scale — and the wiring that ties short-lived certificates to the systems that grant access. That is the operational gap. It is also less dramatic than the threat picture suggests.

Smallstep is built specifically for this problem. The platform automates certificate issuance and renewal, enforces hardware attestation before any certificate is issued, and integrates with the device management and identity systems enterprises already run. The private key stays in the hardware. The certificate rotates on a schedule short enough that a compromised credential is stale before it can do damage. Revocation becomes the backstop, not the front line.

The work of closing the gap between identity claims and hardware proof — of replacing portable secrets with non-exportable ones — is real engineering work. It is not glamorous. But it is the work that makes the architecture hold up.

The right reaction to AI-accelerated vulnerability discovery is not panic. The security community has lived through accelerations before. What changes now is which of the old assumptions still earn their keep.

The assumption that software stays trustworthy long enough for slow-moving credentials to be safe was always optimistic. It will probably be true less often going forward.

You cannot patch your way out of this one. The load has to move somewhere harder to budge. The systems that hold up in this environment will be the ones that continuously re-establish trust faster than compromise can spread. The infrastructure to do that exists. Using it is a decision.

Mike Malone is the CEO and Founder of Smallstep, where he has spent six years working to make infrastructure security easy. Prior to Smallstep, Mike was CTO at Betable. He is at heart a distributed systems enthusiast who builds open source solutions to big problems in Production Identity, and he is a published research author in the field of cybersecurity policy.